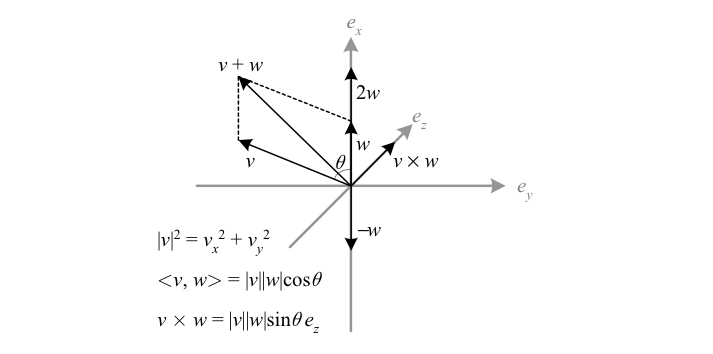

We can obtain further structure by generalizing the properties of vectors in a Cartesian coordinate system. A vector space (AKA linear space) is the algebraic abstraction of the relationships between Cartesian vectors, and it is this structure that we formalize and build up to.

| | Vectors | Scalars | Scalar example |
|---|---|---|---|
| Left \({R}\)-module | Abelian group \({V}\) | Unital ring \({R}\) | Real \({n\times n}\) matrices |
| \({R}\)-module | Abelian group \({V}\) | Commutative ring \({R}\) | Integers |
| Vector space | Abelian group \({V}\) | Field \({F}\) | Real numbers |
| Cartesian space \({\mathbb{R}^{3}}\) | 3-vectors | \({\mathbb{R}}\) | |

Notes: The abelian group of vectors has elements denoted \({u, v, w, \dots}\), with operation \({+}\) and identity \({\mathbf{0}}\). The ring of scalars has elements denoted \({a, b, c, \dots}\), with operations \({+}\) and \({\times}\), and special elements \({\mathbf{0}}\) and \({\mathbf{1}}\).

Scalar multiplication assigns to each scalar \({a}\) and vector \({v}\) another vector \({av}\), such that for all scalars \({a,b}\) and vectors \({v,w}\):

- \({(ab)v=a(bv)}\)

- \({(a+b)v=av+bv}\)

- \({a(v+w)=av+aw}\)

- \({\mathbf{1}v=v}\)
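As a quick sanity check of the four rules, the sketch below tests them on a handful of sample scalars and vectors in \({\mathbb{Q}^{2}}\), using Python's `fractions.Fraction` so that both sides of each rule compare exactly. The helper names `add`, `smul` and `check_axioms` are illustrative assumptions; a finite spot-check like this can expose a faulty definition but does not prove the rules in general.

```python
from fractions import Fraction
from itertools import product

# Vectors in Q^2 are tuples of Fractions; exact arithmetic means both sides
# of each rule can be compared with == without rounding error.
def add(v, w):
    """Vector addition, componentwise."""
    return tuple(x + y for x, y in zip(v, w))

def smul(a, v):
    """Scalar multiplication a v, componentwise."""
    return tuple(a * x for x in v)

def check_axioms(scalars, vectors):
    """Return True if the four scalar-multiplication rules hold on all samples."""
    for a, b in product(scalars, repeat=2):
        for v, w in product(vectors, repeat=2):
            if smul(a * b, v) != smul(a, smul(b, v)):            # (ab)v = a(bv)
                return False
            if smul(a + b, v) != add(smul(a, v), smul(b, v)):    # (a+b)v = av + bv
                return False
            if smul(a, add(v, w)) != add(smul(a, v), smul(a, w)):  # a(v+w) = av + aw
                return False
    return all(smul(Fraction(1), v) == v for v in vectors)       # 1v = v

scalars = [Fraction(0), Fraction(1), Fraction(-2), Fraction(3, 4)]
vectors = [(Fraction(1), Fraction(0)), (Fraction(2, 3), Fraction(-5))]
print(check_axioms(scalars, vectors))  # True
```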

A left (right) module defines scalar multiplication only from the left (right), while for the other structures scalar multiplication from either side is equivalent; for a right module the rules above are rewritten accordingly, e.g. the first becomes \({v(ab)=(va)b}\). Modules allow us to generalize real scalars to rings of scalars that lack multiplicative inverses. For example, any abelian group \({V}\) can be made into a module over the ring of integers by defining \({av\equiv v+v+\dotsb+v}\) (\({a}\) times) for positive \({a}\), together with \({0v\equiv\mathbf{0}}\) and \({(-a)v\equiv-(av)}\); similarly, any unital ring \({R}\) can itself be viewed as an \({R}\)-module. A module homomorphism, i.e. a map between modules that preserves vector addition and scalar multiplication, is called linear.
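The repeated-addition construction of a \({\mathbb{Z}}\)-module can be made concrete. In this sketch the abelian group is assumed to be \({\mathbb{Z}/6}\) (integers mod 6 under addition), negative integers act by negating the result, and the function names are hypothetical. Since \({6v=\mathbf{0}}\) for every \({v}\), no nonempty subset of \({\mathbb{Z}/6}\) is linearly independent over \({\mathbb{Z}}\), so this module has no basis.

```python
from itertools import product

N = 6  # assumed example group: Z/6, the integers mod 6 under addition

def add(v, w):
    """Group operation of Z/6."""
    return (v + w) % N

def neg(v):
    """Additive inverse in Z/6."""
    return (-v) % N

def zmul(a, v):
    """Integer a times v: v + v + ... + v (|a| times), negated if a < 0."""
    result = 0  # group identity
    for _ in range(abs(a)):
        result = add(result, v)
    return neg(result) if a < 0 else result

def is_z_module(scalars):
    """Check the four scalar-multiplication rules for the given integers and all of Z/6."""
    for a, b in product(scalars, repeat=2):
        for v, w in product(range(N), repeat=2):
            if zmul(a * b, v) != zmul(a, zmul(b, v)):
                return False
            if zmul(a + b, v) != add(zmul(a, v), zmul(b, v)):
                return False
            if zmul(a, add(v, w)) != add(zmul(a, v), zmul(a, w)):
                return False
    return all(zmul(1, v) == v for v in range(N))

print(zmul(4, 5), zmul(-1, 2), zmul(6, 5))  # 2 4 0
print(is_z_module(range(-7, 8)))            # True
```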

Every vector space \({V}\) has a basis, a linearly independent set of vectors \({e_{\mu}}\) whose linear combinations span all of \({V}\). The dimension of \({V}\) is the number of vectors in a basis. In a given basis a vector \({v}\) can then be expressed in terms of its components \({v^{\mu}}\):

\(\displaystyle v=\sum_{\mu}v^{\mu}e_{\mu}\equiv v^{\mu}e_{\mu} \)
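Finding the components \({v^{\mu}}\) amounts to solving a linear system. A minimal sketch for a basis of \({\mathbb{R}^{2}}\), assuming vectors are given by their standard coordinates and solving by Cramer's rule over exact rationals; `components` and `combine` are illustrative names, and `combine` carries out the Einstein sum \({v^{\mu}e_{\mu}}\) to confirm the round trip.

```python
from fractions import Fraction

def components(basis, v):
    """Solve v = v^0 e_0 + v^1 e_1 for (v^0, v^1) by Cramer's rule."""
    (e0x, e0y), (e1x, e1y) = basis
    det = e0x * e1y - e1x * e0y
    if det == 0:
        raise ValueError("the vectors are linearly dependent, so not a basis")
    vx, vy = v
    return (Fraction(vx * e1y - e1x * vy, det),
            Fraction(e0x * vy - vx * e0y, det))

def combine(coeffs, basis):
    """The Einstein sum v^mu e_mu, returned in standard coordinates."""
    return tuple(sum(c * e[i] for c, e in zip(coeffs, basis)) for i in range(2))

basis = [(1, 1), (1, -1)]
v = (3, 1)
coeffs = components(basis, v)
print(coeffs == (2, 1), combine(coeffs, basis) == v)  # True True
```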

Here the Einstein summation convention has been used, i.e. a repeated index implies summation. Choosing bases for its domain and codomain thus associates a matrix with any vector space homomorphism (linear map). In particular, a change of basis can be represented by a matrix:

\(\displaystyle e_{\mu}^{\prime}=A^{\nu}{}_{\mu}e_{\nu}\)
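Since \({v=v^{\nu}e_{\nu}=v'^{\mu}e'_{\mu}=v'^{\mu}A^{\nu}{}_{\mu}e_{\nu}}\), the components transform with the inverse matrix, \({v'^{\mu}=(A^{-1})^{\mu}{}_{\nu}v^{\nu}}\). The sketch below checks this in two dimensions, storing \({A^{\nu}{}_{\mu}}\) as `A[nu][mu]` (row index = upper index); the function names and the particular matrix are illustrative assumptions.

```python
from fractions import Fraction

def new_basis(A, basis):
    """e'_mu = A^nu_mu e_nu, with A[nu][mu] holding A^nu_mu."""
    n, dim = len(basis), len(basis[0])
    return [tuple(sum(A[nu][mu] * basis[nu][i] for nu in range(n)) for i in range(dim))
            for mu in range(n)]

def inverse_2x2(A):
    """Exact inverse of a 2x2 matrix."""
    (a, b), (c, d) = A
    det = Fraction(a * d - b * c)
    if det == 0:
        raise ValueError("a change of basis must be invertible")
    return [[d / det, -b / det], [-c / det, a / det]]

def transform_components(A, v_old):
    """v'^mu = (A^-1)^mu_nu v^nu."""
    A_inv = inverse_2x2(A)
    return [sum(A_inv[mu][nu] * v_old[nu] for nu in range(2)) for mu in range(2)]

def combine(coeffs, basis):
    """The Einstein sum v^mu e_mu, returned in standard coordinates."""
    dim = len(basis[0])
    return tuple(sum(c * e[i] for c, e in zip(coeffs, basis)) for i in range(dim))

basis = [(1, 0), (0, 1)]
A = [[2, 1], [0, 1]]   # e'_0 = 2 e_0,  e'_1 = e_0 + e_1
v_old = [3, 5]

primed = new_basis(A, basis)
v_new = transform_components(A, v_old)
print(primed)                                           # [(2, 0), (1, 1)]
print(combine(v_new, primed) == combine(v_old, basis))  # True
```

The vector itself is unchanged; only its components and the basis vectors move, in opposite ("inverse") ways.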

For a real vector space \({V}\), the bases viewed as ordered sequences of vectors split into two classes, each class consisting of bases related to one another by changes of basis with positive determinant. An orientation of \({V}\) is then a choice of one of these two classes.
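The orientation classes can be tested directly. Writing each ordered basis of \({\mathbb{R}^{n}}\) as the rows of a matrix \({B}\) in standard coordinates, the change of basis between two bases has determinant \({\det B_{2}/\det B_{1}}\) (transposing does not change a determinant), so the bases lie in the same class exactly when \({\det B_{1}}\) and \({\det B_{2}}\) have the same sign. The sketch below, with illustrative function names, computes determinants by Gaussian elimination over exact rationals.

```python
from fractions import Fraction

def det(rows):
    """Determinant by Gaussian elimination over exact rationals."""
    m = [[Fraction(x) for x in row] for row in rows]
    n = len(m)
    sign, result = 1, Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if m[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != col:               # a row swap flips the sign
            m[col], m[pivot] = m[pivot], m[col]
            sign = -sign
        result *= m[col][col]
        for r in range(col + 1, n):    # clear entries below the pivot
            factor = m[r][col] / m[col][col]
            for c in range(col, n):
                m[r][c] -= factor * m[col][c]
    return sign * result

def same_orientation(basis1, basis2):
    """True iff the change of basis from basis1 to basis2 has positive determinant."""
    d1, d2 = det(basis1), det(basis2)
    if d1 == 0 or d2 == 0:
        raise ValueError("not a basis")
    return (d1 > 0) == (d2 > 0)

std = [(1, 0, 0), (0, 1, 0), (0, 0, 1)]
swapped = [(0, 1, 0), (1, 0, 0), (0, 0, 1)]  # first two vectors exchanged
cyclic = [(0, 1, 0), (0, 0, 1), (1, 0, 0)]   # cyclic permutation
print(same_orientation(std, swapped), same_orientation(std, cyclic))  # False True
```

Exchanging two basis vectors switches the class, while a cyclic permutation of three vectors (two exchanges) preserves it.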

| Δ It is important to remember that a module may lack a basis or other intuitive features of a vector space. |

Over a given field there is a unique vector space (up to isomorphism) of each dimension; for example, every \({n}\)-dimensional real vector space is isomorphic to \({\mathbb{R}^{n}}\). In this book we will almost exclusively consider vector spaces over the real or complex numbers, and such vector spaces can be obtained from one another as follows. The complexification of a real vector space \({V}\), denoted \({V^{\mathbb{C}}}\) (or \({V_{\mathbb{C}}}\)), substitutes complex scalars for real ones; a basis of \({V}\) thus remains a basis of \({V^{\mathbb{C}}}\). The decomplexification (AKA realification) of a complex vector space \({W}\) with basis \({e_{\mu}}\) restricts the scalars to real numbers, yielding a real vector space \({W^{\mathbb{R}}}\) of twice the dimension with basis \({\{e_{\mu},ie_{\mu}\}}\). Finally, a complex structure on a \({2n}\)-dimensional real vector space \({U}\) is a linear transformation \({J\colon U\to U}\) that squares to the negative identity, \({J^{2}=-\mathrm{id}_{U}}\). One can then choose vectors \({e_{\mu}}\) such that \({\left\{ e_{\mu},J\left(e_{\mu}\right)\right\}}\) is a basis for \({U}\), and defining \({(a+ib)u\equiv au+bJ(u)}\) makes \({U}\) into an \({n}\)-dimensional complex vector space \({W^{J}}\) with basis \({e_{\mu}}\).
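The three constructions can be seen together on \({\mathbb{C}^{n}}\). In this sketch, a complex vector is realified into \({\mathbb{R}^{2n}}\) using the ordered basis \({\{e_{1},\dots,e_{n},ie_{1},\dots,ie_{n}\}}\); on these coordinates the complex structure \({J}\) (multiplication by \({i}\)) sends \({e_{\mu}\mapsto J(e_{\mu})}\) and \({J(e_{\mu})\mapsto-e_{\mu}}\), and the rule \({(a+ib)u=au+bJ(u)}\) recovers ordinary complex scalar multiplication. The function names and the coordinate ordering are assumptions made for illustration; the sample values are chosen to be exact in floating point.

```python
def realify(z):
    """C^n -> R^2n: real coordinates w.r.t. the ordered basis {e_1..e_n, i e_1..i e_n}."""
    return tuple(w.real for w in z) + tuple(w.imag for w in z)

def to_complex(u):
    """Inverse of realify: R^2n -> C^n."""
    n = len(u) // 2
    return tuple(complex(u[k], u[n + k]) for k in range(n))

def J(u):
    """Complex structure on R^2n: e_mu -> J(e_mu), J(e_mu) -> -e_mu."""
    n = len(u) // 2
    x, y = u[:n], u[n:]
    return tuple(-t for t in y) + tuple(x)

def scalar_mul(c, u):
    """Complex scalar action on (U, J): (a + ib) u = a u + b J(u)."""
    return tuple(c.real * s + c.imag * t for s, t in zip(u, J(u)))

z = (1 + 2j, -3 + 0.5j)
u = realify(z)  # (1.0, -3.0, 2.0, 0.5)
c = 2 - 1j

print(J(J(u)) == tuple(-t for t in u))                          # True: J^2 = -1
print(to_complex(scalar_mul(c, u)) == tuple(c * w for w in z))  # True
```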